MIT Graduate Earns ACM Doctoral Dissertation Award

Hassanieh Developed Highly Efficient Fourier Transform Algorithms for Large Datasets

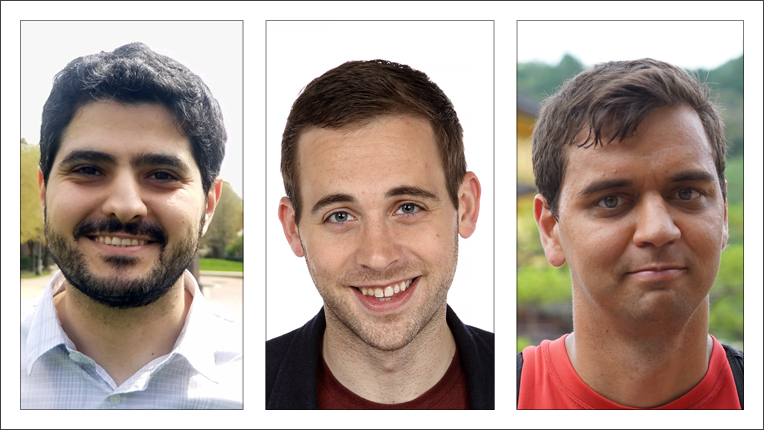

Haitham Hassanieh is the recipient of the Association for Computing Machinery (ACM) 2016 Doctoral Dissertation Award. Hassanieh developed highly efficient algorithms for computing the Sparse Fourier Transform, and demonstrated their applicability in many domains including networks, graphics, medical imaging and biochemistry. In his dissertation, The Sparse Fourier Transform: Theory and Practice, he presented a new way to decrease the amount of computation needed to process data, thus increasing the efficiency of programs in several areas of computing.

In computer science, the Fourier transform is a fundamental tool for processing streams of data. It identifies frequency patterns in the data, a task that has a broad array of applications. For many years, the Fast Fourier Transform (FFT) was considered the most efficient algorithm in this area. With the growth of Big Data, however, the FFT can no longer keep pace with the massive increase in the size of datasets. In his doctoral dissertation, Hassanieh presents the theoretical foundation of the Sparse Fourier Transform (SFT), an algorithm that is more efficient than the FFT for data with a limited number of frequencies. He then shows how this new algorithm can be used to build practical systems that solve key problems in six application areas: wireless networks, mobile systems, computer graphics, medical imaging, biochemistry and digital circuits. Hassanieh’s Sparse Fourier Transform can process data at a rate that is 10 to 100 times faster than was possible before, thus greatly increasing the power of networks and devices.
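
To make "data with a limited number of frequencies" concrete, here is a minimal Python sketch. It is an illustration only and is not Hassanieh's algorithm: it runs a naive discrete Fourier transform over a made-up 64-sample signal built from three tones and lists the frequency bins that carry energy. The function names, the test signal and the threshold are all assumptions chosen for this example.

```python
import cmath
import math


def dft(samples):
    """Naive O(n^2) discrete Fourier transform (illustration only)."""
    n = len(samples)
    return [
        sum(x * cmath.exp(-2j * math.pi * k * t / n) for t, x in enumerate(samples))
        for k in range(n)
    ]


def dominant_bins(samples, threshold=1e-6):
    """Return the indices of bins whose magnitude exceeds threshold * n."""
    n = len(samples)
    spectrum = dft(samples)
    return [k for k, coeff in enumerate(spectrum) if abs(coeff) > threshold * n]


# Hypothetical test signal: 64 samples made of three complex tones
# at frequency bins 3, 10 and 17 (amplitudes are arbitrary).
N = 64
TONES = {3: 1.0, 10: 0.5, 17: 0.25}
signal = [
    sum(amp * cmath.exp(2j * math.pi * freq * t / N) for freq, amp in TONES.items())
    for t in range(N)
]

bins = dominant_bins(signal)
print(bins)  # [3, 10, 17]
```

Only 3 of the 64 bins are non-zero here, yet this sketch still computes every one of them. That wasted work on empty bins is the kind of cost a sparse transform aims to avoid.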

Hassanieh is an Assistant Professor in the Department of Electrical and Computer Engineering and the Department of Computer Science at the University of Illinois at Urbana-Champaign. He received his MS and PhD in Electrical Engineering and Computer Science at the Massachusetts Institute of Technology (MIT). A native of Lebanon, he earned a BE in Computer and Communications Engineering from the American University of Beirut. Hassanieh’s Sparse Fourier Transform algorithm was chosen by MIT Technology Review as one of the top 10 breakthrough technologies of 2012. He has also been recognized with the Sprowls Award for Best Dissertation in Computer Science, and the SIGCOMM Best Paper Award.

Honorable Mention for the 2016 ACM Doctoral Dissertation Award went to Peter Bailis of Stanford University and Veselin Raychev of ETH Zurich.

In Bailis’s dissertation, Coordination Avoidance in Distributed Databases, he addresses a perennial problem in a network of multiple computers working together to achieve a common goal: Is it possible to build systems that scale efficiently (process ever-increasing amounts of data) while ensuring that application data remains provably correct and consistent? These concerns are especially timely as Internet services such as Google and Facebook have led to a vast increase in the global distribution of data. In addressing this problem, Bailis introduces a new framework, invariant confluence, that mitigates the fundamental tradeoffs between coordination and consistency. His dissertation breaks new conceptual ground in the areas of transaction processing and distributed consistency—two areas thought to be fully understood. Bailis is an Assistant Professor of Computer Science at Stanford University. He received a PhD in Computer Science from the University of California, Berkeley and his AB in Computer Science from Harvard College.

Raychev’s dissertation, Learning from Large Codebases, introduces new methods for creating programming tools based on probabilistic models of code that can solve tasks beyond the reach of current methods. As the size of publicly available codebases has grown dramatically in recent years, so has interest in developing programming tools that solve software tasks by learning from these codebases. Raychev’s dissertation takes a novel approach to this challenge, combining advanced techniques in programming languages with machine learning practices. In the thesis, Raychev lays out four separate methods that detail how machine learning approaches can be applied to program analysis in order to produce useful programming tools. These include: code completion with statistical language models; predicting program properties from big code; learning programs from noisy data; and learning statistical code completion systems. Raychev’s work is regarded as having the potential to open up several promising new avenues of research in the years to come. Raychev is currently a co-founder and Chief Technology Officer of DeepCode, a company developing artificial intelligence-based programming tools. He received a PhD in Computer Science from ETH Zurich. A native of Bulgaria, he received MS and BS degrees from Sofia University.

Doctoral Dissertation Award Recognizes Young Researchers

Haitham Hassanieh of the University of Illinois at Urbana-Champaign has received ACM's 2016 Doctoral Dissertation Award for developing highly efficient algorithms for computing the Sparse Fourier Transform. Honorable Mentions went to Peter Bailis of Stanford University for coordination avoidance in distributed databases, and Veselin Raychev of ETH Zurich for creating programming tools based on probabilistic models of code that can solve tasks beyond the reach of current methods.